Announcing the Peter Picture of the Day

Peter Quinn Metcalf was born January 27th, 2011, at 8:56pm. I’m pleased to announce the Peter Picture of the Day, a photoblog featuring pictures of Peter. It’s modeled after his brother’s photoblog, the Matthew Picture of the Day, which has been running for three and a half years. Although the blogs look alike, there are a few subtle changes to the PPOD, some of which I hope to incorporate into the MPOD.

Most notably, the full-scale picture is now available: simply click on the picture and you will be able to download the full picture. This should make for much better prints for those who wish to print out PPOD pictures. Warning: if uncropped, these pictures are about 3MB apiece. That seemed much too large back when I established the MPOD, and so it has used only the display size, 720 pixels wide. But these days, a 3MB image doesn’t seem so unreasonable.

I’m also now posting the date that each photograph was taken (a feature I should be able to add soon to MPOD), and I’m also putting the comments section on the main page, instead of in a pop-up window. As with MPOD, each commenter’s first comment must be approved by the moderator (me), to avoid spam postings.

I’ve loaded a couple of pictures to start things off, after which there should be one new picture each day, posted at about 6am Eastern Time.

January 29, 2011 No Comments

The New Sierra Club Executive Director

A recent (January 30, 2010) episode of Sierra Club Radio begins with an interview of the new executive director, Michael Brune, which was the first I’d heard in detail about him. I found it quite encouraging, in part because of what Mr. Brune said, but more refreshingly, because of his tone. The outgoing executive director, Carl Pope, periodically recorded commentaries for Sierra Club Radio, which I never really cared for. Mr. Pope’s tone was brash, smug, and confrontational, and his messages were needlessly political: hyping up small or incidental or Pyrrhic victories, spinning away the setbacks, never allowing that an issue might have subtleties and complications. There were good guys and villains, and the good guys were always winning, most likely thanks to the Sierra Club and its allies. Carl Pope’s commentaries always sounded like a slightly disingenuous pitch. Mr. Brune, by contrast, sounds very much like a thoughtful person.

That I picked up on this contrast is perhaps a bit ironic, as Mr. Brune as an activist was known for a rather confrontational style, as elucidated in a Living on Earth interview, a KQED Forum interview, and in a Grist article. Prior to the Sierra Club, Mr. Brune was executive director of Rainforest Action Network, where his most notorious stunt involved the campaign to get Home Depot to stop buying wood from endangered forests. Sympathetic Home Depot employees contacted Mr. Brune and clued him in to the code for the Home Depot intercom system, by which Rainforest Action Network activists could go into any Home Depot, find the intercom stations, and broadcast messages storewide about the source of the wood products for sale. This campaign worked, although I’m not really sure this is the sort of thing I’d like the Sierra Club to start doing more of.

Mr. Brune made what I think is a salient and subtle point in praising the Sierra Club for “evolving” over the past decade or so, for doing a good job of “holding onto its roots”–protecting wild places and the like–but at the same time “being responsive to the great threat of climate change.” This phrasing speaks to me–it signals an understanding that the environmental challenges we face and our responses to them are not identical to those of twenty or thirty years ago. Urban environmentalists often note a disconnect with what we might call “traditional” environmentalism, manifested as an insistence on saving every tree, on opposing every development, and on always characterizing the principals involved in any development as greedy. This holds even if clearing the trees to make way for a development would enable its future residents to live in ways–without cars, for example–that could drastically reduce their overall environmental impact, compared with what would result should the traditional environmentalists’ protests succeed and the greedy developers choose to build instead on some further-flung plot of land that’s less dear to said environmentalists. I’m oversimplifying the issue here of course, and I don’t want to presume that when Mr. Brune says the Club is evolving that he necessarily means that it will evolve exactly the way I want it to. But it does seem to me that Mr. Brune is acknowledging the need to look at environmental challenges in a different way than has been considered traditional.

Two years ago, he wrote Coming Clean–Breaking America’s Addiction to Coal and Oil, published by Sierra Club Books. That energy was the topic on the mind of someone employed to save the rain forests is, itself, encouraging. He was interviewed on Sierra Club Radio for September 6, 2008 upon release of the book, and this earlier interview is perhaps more insightful than the current one. He struck a thoughtful and diplomatic tone, giving respectfully detailed answers to complex topics. He discussed, at some length, the problems with biofuels, beginning with a remark that the idea of growing your own fuels is, no doubt, very alluring. He concludes that “biofuels can only at best be part of the solution,” further noting that if we were to turn every single last ear of corn produced in the United States into ethanol, it would provide a scant 12% of our fuel needs. He resists the temptation to simply classify biofuels as “good” or “bad,” and he uses a quantitative figure in a proper and meaningful way, which is more than can be said of much of what passes for environmental discourse these days. The urbanist will note that he also understands that biofuels are an attempt at a solution to what is in many respects the wrong question–instead of asking only how we’re going to keep fueling our cars in a post-carbon age, we should also be asking whether we need so many cars to begin with. Brune mentions, several times, that “we need to promote ways of transportation that are not centered on the automobile.” When asked for ways in which individuals could get involved in breaking our oil addiction, he suggested getting involved with your local bicycle advocacy organization, to get more bike lanes and to encourage office buildings to offer bicycle parking.

It also appears–although not having read his book, I’m not certain–that he wants to play down the role of individuals greening their own lives and instead look towards action for large-scale, widespread institutional change. In the 2008 interview, the host specifically asks him about a claim in the book that individual actions, like turning down your thermostat and changing out your lightbulbs, won’t be sufficient to solve the climate change problem. And although he’s not as polemical as Mike Tidwell in his Washington Post op-ed, the sentiment is the same: to make the changes that matter, we need large-scale, collective action. And Brune makes clear in the current interview that he believes that there is no organization better suited to lead this action than the Sierra Club.

Brune, I couldn’t help but notice, is only two years older than I am, and is probably as young as one can be to also have enough experience to be considered a reasonable candidate for executive director of an organization with the size and stature of the Sierra Club. Carl Pope, I gather, is a few years younger than my parents. So there really is a transition here, a passing of the baton from one generation to the next. I’m optimistic about Brune, and will watch carefully to see where the Club goes.

February 8, 2010 1 Comment

Making sense of the March Meeting

This year’s March Meeting will be in Portland, Oregon. (See previous blog posts from 2009 and 2008, also here.) It is the largest of the meetings put on by the American Physical Society; this year there will be 581 sessions and 818 invited speakers. Most time blocks–from Monday morning through Thursday mid-day–will have a full program of 42 parallel sessions. This is slightly larger than last year, in which most time blocks had 41 parallel sessions, with a total of 562 sessions. There were 832 invited speakers last year, so this year has slightly fewer. Each session can have up to 15 contributed talks (10 minutes each, with 2 minutes for questions and changing speakers) or 5 invited talks (30 minutes each, with 6 minutes for questions and changing speakers), so there could be almost 6800 talks in total, but most sessions aren’t completely programmed. This year, I am not giving a talk.

With such a mass of talks going on, planning your time at the conference and deciding how long to stay takes some effort. In the past, abstracts for all talks, and for the 2000 or so posters that the meeting has each year, were printed in two volumes that resembled phone books, plus a pocket-sized book of session titles. These days, one gets a smaller book that lists only the titles of the talks, and of course the entire program is also available online. Nevertheless, over the years I’ve found the layout of the program to be a bit wanting, and so I put together some scripts that parse the schedule and author information to print it out in a form that I find easier to work with.

My scripts and their outputs have evolved over several years, and currently they produce three files. They take as input the Epitome, which is a chronological list of sessions, and the list of invited speakers. At present, both of these must be cut-and-pasted from the meeting website into text files for my program to read. (Maybe next year I’ll have them automatically grab the files from the APS website.)

The first output file is a version of the Epitome more suitable for browsing on a printed page than the materials from APS. It skips the non-talk sessions (like receptions and unit business meetings) and makes sure all sessions of each time block are together on a single page.

The second file serves to give a sense of the structure of the March Meeting: it is a grid of time blocks versus session numbers, with symbols indicating the number of invited talks in each session. It also has a list of room numbers associated with each session number: for the most part, all sessions of a certain number (such as A14, B14, D14, and so on) will be in the same room, but not always.
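The grid idea can be illustrated with a minimal sketch. This is Python, not the Tcl that the actual scripts are written in, and it is not their logic: it assumes session codes are a single block letter followed by a session number (as in A14, B14, D14), and the cell symbols, the function names, and the sample counts are all made up for illustration.

```python
import re
from collections import defaultdict

# Assumed code format: one block letter followed by a session number, e.g. "A14".
SESSION_CODE = re.compile(r"^([A-Z])(\d+)$")


def build_grid(invited_counts):
    """Map block letter -> {session number -> number of invited talks}."""
    grid = defaultdict(dict)
    for code, n_invited in invited_counts.items():
        match = SESSION_CODE.match(code)
        if match is None:
            continue  # skip anything that does not look like a session code
        block, number = match.group(1), int(match.group(2))
        grid[block][number] = n_invited
    return grid


def render(grid):
    """One row per time block, one column per session number.

    Hypothetical symbols: '.' = no such session, '-' = no invited talks,
    a digit = that many invited talks.
    """
    numbers = sorted({n for row in grid.values() for n in row})
    lines = ["   " + " ".join(f"{n:>2}" for n in numbers)]
    for block in sorted(grid):
        cells = []
        for n in numbers:
            count = grid[block].get(n)
            if count is None:
                cells.append(" .")
            elif count == 0:
                cells.append(" -")
            else:
                cells.append(f"{count:>2}")
        lines.append(f"{block}  " + " ".join(cells))
    return "\n".join(lines)


# Made-up sample data, not taken from any real meeting program.
sample = {"A14": 5, "B14": 0, "D14": 1, "A2": 0, "B2": 5}
grid = build_grid(sample)
print(render(grid))
```

Keeping the parsed counts in a nested dictionary, separate from the rendering, means the same data could be written out as plain text or as a TeX table.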

The third file is a list of invited talks, sorted by session instead of by author last name. Because of the large number of parallel sessions and the high likelihood of schedule conflicts, I think it makes sense to look time block by time block.

Since this year I’ve actually got these files produced well before the meeting, I’m posting them here in case anyone else should like to use them too.

Here are the three PDF files I’ve generated:

If you like the information but want to fiddle with the formatting, here are the .tex files that generated the PDFs; they need to be run through LaTeX. Because of a quirk in WordPress, they’re all saved as .tex.txt. The APS online information already uses TeX formatting for accent marks in speaker names, and for super- and sub-scripts in talk titles. (There are occasionally errors in APS’s TeX formatting–this year, the title of Philip Anderson’s talk is missing the math mode $ characters surrounding the ^3 superscript command. Despite my efforts to automate everything with these scripts, fixes like this still must be done by hand.)
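Errors of this kind could at least be flagged automatically before the hand fix. The following is a rough Python sketch, not part of the Tcl scripts: it assumes math mode is always delimited by single $ pairs, and the function name and the sample titles are invented for illustration.

```python
import re

MATH_SPAN = re.compile(r"\$[^$]*\$")  # text between a pair of $ signs
ESCAPED = re.compile(r"\\[\^_]")      # \^ (accent command) and \_ (literal underscore)


def has_bare_script(title):
    """Return True if a ^ or _ appears outside $...$ math mode."""
    outside_math = MATH_SPAN.sub("", title)
    outside_math = ESCAPED.sub("", outside_math)
    return "^" in outside_math or "_" in outside_math


# Made-up titles for illustration only.
titles = [
    "Films of ^3He on graphite",      # superscript outside math mode
    "Films of $^3$He on graphite",    # correctly wrapped
    r"Notes on the C\^ote model",     # accent command, not a superscript
]
flagged = [t for t in titles if has_bare_script(t)]
print(flagged)
```

This only reports suspicious titles; deciding where the $ characters belong is still a judgment call.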

Finally, here is the Tcl script that I use to parse the files and write the .tex files. It’s not very good code, having been mucked around with once a year for a few years and in general cobbled together from earlier scripts. It works, provided the settings file is appropriately edited, on March Meeting files back to 2006, when the invited speaker list was first published online. The parts that handle the Epitome also work on the 2005 files; the Epitome format in 2004 and earlier years was different. As with the .tex files, I’ve needed to upload it as a .tcl.txt file here.

In a future post, I hope to use the results of the scripts, particularly the grid, to analyze ways in which the March Meeting has changed over the years.

January 31, 2010 1 Comment

Travels with our toddler

We recently took Matthew on his first overnight train trip; regular viewers of the Matthew Picture of the Day can expect a couple of shots from on board. We took the Capitol Limited all the way to and from Chicago, in a bedroom in a sleeping car. As national network trains go, this is quite a convenient one–although it takes 17 hours to travel the 780 rail miles, via Pittsburgh and Cleveland, most of that is at night, and once you factor in time to eat dinner and breakfast and time to get ready for bed and to get dressed, there’s not that much idle time left. Matthew did well, and the train again proved to be a civilized and relaxing way to travel. He’s old enough to get some fascination from looking out the train window, which is quite an improvement from his previous trip, when he was one, when we went to New York to buy my Brompton. So in total, Matthew now has 2012 Amtrak miles.

Matthew, though, has logged more mileage in the air than by any other means: to date, 29063 miles in 26 segments. Most of this has gone well. We’ve always bought him a seat, even when he was young enough to travel as a “lap child.” In the past, it was common to travel with a young child as a “lap child” and then use an empty seat for him while aboard the airplane, but in recent years, there is no such thing as an empty seat, and lap children must almost always actually be carried on a grown-up’s lap for the whole flight. My advice, then, is not to count on there being an empty seat, but rather, to count on there not being an empty seat, and if you can at all afford it, buy the seat for the child.

January 24, 2010 No Comments

Our inadvertent pumpkin patch

Although you wouldn’t be able to tell by looking at it–in fact, you might be tempted to conclude the opposite–I really do want there to be a recognizable garden someday in what can only honestly be called our yard. I dream about growing flowers and vegetables, and a rain garden and maybe blueberries and an apple tree. But, for a variety of reasons, I haven’t done anything and can barely keep up with mowing and controlling the weeds.

But it turns out you can grow things using the lackadaisical approach, and in our case, it’s pumpkins.

This is how the pumpkin plant looked in September.

The truly amazing bit is that I didn’t ever plant any pumpkin seeds. The pumpkin vine grew out of a side vent in our compost bin, presumably from a pumpkin seed that germinated instead of decomposing while inside the bin.

Since I didn’t plant these, I don’t actually know which variety of pumpkin they are, but I presume they are the inedible jack-o-lantern type from Halloween 2008. As with everything else in the yard, I didn’t tend to these, so they didn’t grow nearly as large as a proper jack-o-lantern would. Indeed, the pumpkins feel solid, not hollow.

I “harvested” them recently, although too late to be a part of our Halloween decorations. But, for the record, here is our garden output 2009:

November 10, 2009 2 Comments

Confounded smoke alarms

My electrician, who is safety-conscious above all else, has been bugging me for years now about smoke alarms. Sure, I have several battery-powered smoke alarms up, but from a safety improvement per dollar spent perspective, one really wants smoke alarms that are:

- hard-wired, with

- battery backup, and

- interconnected

The batteries in battery-powered smoke alarms will run out. They do chirp to let us know it’s time to change the battery, but more often than not I won’t have a spare battery handy, or I won’t have a step stool nearby, or it will be the middle of the night, so instead of going back on the ceiling with a new battery like it’s supposed to, the alarm will sit around on a counter, battery-less, sometimes for weeks. Hard-wired smoke alarms solve the dead battery problem because they draw their power from the house electrical wiring. As it turns out, electrical fires that disrupt the power before smoke could be detected are really rare, and our power is pretty reliable, so the risk that the power’s off when the alarm needs to sound is really quite small, smaller than the risk that your battery-powered alarm will be sitting, battery-less, on the counter. And most hard-wired alarms also have battery backup, so you’re covered during power outages, too.

There are two smoke-detection technologies: ionization and photoelectric. Ionization sensors do well with small smoke particles, from fast-burning fires, while photoelectric sensors do better with large smoke particles from smoldering fires. Most safety recommendations (including Consumer Reports) are reluctant to specify one as being a better choice, and recommend both. So add to our wish-list:

- dual-sensor

Interconnection of smoke alarms means that when one alarm goes off, all of them sound. So if there’s a fire in the basement while you’re asleep, the alarm in your second-floor bedroom will also go off, giving you much more time to escape than waiting either for enough smoke to set off a second-floor alarm or for you to hear the far-away alarm. The interconnection is conventionally done with three-conductor wiring: all the smoke alarms need to be installed on the same circuit and the third wire is used as the alarm interconnection signal wire. This is easy in new construction but really hard to retrofit: getting a new circuit to the ceiling of every location for a smoke alarm would mean lots and lots of holes in the walls and ceilings.

June 7, 2009 1 Comment

To re-use plastic baggies

I get a fair number of yuppie housewares catalogs in the mail. I browse through them–I actually do like the style of much of their merchandise–but rarely do I actually buy anything. The catalogs want to sell you on the idea that simply buying a decorative plate will transform your whole dining room into something as stylish as that put together for the catalog shoot, and I understand that it won’t.

Of all the yuppie housewares catalogs, NapaStyle is one of the yuppiest, to the point of almost being a laughable self-parody. But I’m writing here about something I bought from them (a NapaStyle exclusive, even) that’s turned out to be quite a satisfying purchase: the Stemware & Plastic Baggie Dryer. I hate to throw away plastic Zip-Lock bags after just one use; far better to wash and re-use them. This device is a ring of eight wood rods that make excellent places to hang plastic baggies to dry.

Of course, one doesn’t need a drying rack to wash and re-use plastic baggies, but I wasn’t regularly doing so until I bought this drying rack. The drying rack works very well for its task. It’s also a very unassuming product: it does not need to have its own box: it was simply placed inside the shipping box. It was not enclosed in a plastic bag, it was not packed with custom-fit styrofoam. It was not tied to a piece of cardboard with twist-ties. It required no assembly. It came with no manual, no marketing survey disguised as a warranty card, and no safety warnings. It has no website. You can’t get on its email list for exciting product updates. It’s made almost entirely of wood. It was made in Canada.

I wish more products were like that.

May 11, 2009 3 Comments

The city becomes beautiful again

The cherry blossoms have come and gone now: two weeks of blooming and four days at the peak. A few pictures of my son enjoying the blossoms made their appearance on the Matthew Picture of the Day. The blooms are the most dramatic signal of the arrival of spring: there are a handful of other plants that bloom one way or another before the cherry trees do, but the cherry trees go from bare branches to large masses of fluffy pinkish-white rather dramatically.

Now the blossoms have blown away, and trees of every type are getting their leaves, and for a week or so the trees are all decorated in Spring Green. I had known about the Crayola color Spring Green since childhood, but it wasn’t until I was living in Ithaca that, after a characteristically long winter, I really understood what it meant. The very light and yellowish green of the nascent leaves on the trees across the street from my apartment were Spring Green; it was finally spring.

So now we begin the six or seven months in which the foliage and blooms of the plants around us make the city beautiful. This is capped by a month or so of fall foliage, after which nature’s beautification fades, slowly, and the seasonal decoration takes over.

Between Thanksgiving and New Year’s, holiday lights make the otherwise bleak city beautiful. Strings of white lights outlining houses and filling in shrubs, some overdone, some very subtle: they compensate for the dwindling sunlight and dormant vegetation. In Ithaca, we got our first snow around Thanksgiving; here in DC it comes much later, usually in January. Snowfall is only very briefly beautiful, when it’s still piled up on otherwise bare branches, and while that on the ground hasn’t been disturbed very much. Then in a few hours, it drops from branches and twigs, and snowplows and other traffic have turned much of it into a dirty grey mush.

One thing I can’t understand is why it is that the holiday lights that made the streets seem so inviting in December look so tacky in the middle of January. The weather is the same, the hours of darkness are much the same, yet holiday lights, and the greens, golds, and reds of Christmas look fantastically out of place. I suppose we’ve been trained by the retail industry to appreciate bold reds and whites, à la Valentine’s day. Is there anyone who actually buys such seasonally-colored servingware from the yuppie housewares catalogs? And after Valentine’s day, as the dreary bleakness of winter presses on, we imagine spring in pastel colors. And then spring happens, like it’s happening now, here in DC.

April 18, 2009 1 Comment

March Meeting 2009

I’ve been back from the 2009 APS March Meeting for two weeks now and so the window of relevance for writing about it is rapidly closing. It was held in Pittsburgh this year, following the same format as last year. The meeting seems to be getting bigger each year: when I first attended, in 2003, there were about 5600 attendees; this year’s meeting drew 7000.

Sessions

For a number of years now I’ve taken the online Epitome and Invited Speaker List and run them through Tcl scripts to make TeX files that give me speaker and session information in a format I think is more useful. This also allows me to look at overall meeting statistics: There were more sessions this year, 558, than in previous years; last year there were 517 sessions. What seems to be growing most sharply are invited talks: there were 825 this year, compared to about 730 in each of the previous three years. Not surprisingly, this corresponded to an increase in the number of sessions with 5 invited talks: there were 95 such sessions this year, about 75 in each of the previous 3 years, and only 15 back in 2005.

Reunion

I only stayed through Wednesday of the meeting this year, taking an evening flight back home. I was rather irritated to find that the Cornell alumni reunion was held on Wednesday night, instead of Tuesday, like it always had been. I don’t know if this had even been published before I made my travel reservations, although I don’t know that I would have stayed an extra night just for that.

Projection

The disappearance of viewgraphs now appears complete. I was one of a handful of holdouts who were still using viewgraphs as late as 2006. Last year, I only saw one talk given using viewgraphs and this year I saw zero. There are still overhead projectors in the rooms, but they are kept on the floor beside the table upon which the computer projector sits. It’s amusing to read the note in the 2002 newsletter of the Division of Condensed Matter Physics:

More and more scientists want computer projection for their talks. This past year, computer projectors were available in invited session rooms only. Projectors are very expensive (~$400/ day/session) and would raise the registration fee at the conference significantly if placed in all rooms. Also, set-up time between talks makes staying on a 12 minute schedule for contributed talks very problematic. APS will continue to increase the availability of computer projection, but will not commit totally to them until price and technical interfacing problems become more tractable.

To be sure, there are problematic computers and I did see talks where roughly half of the time was taken up with computer fiddling.

Context

On the topic of presentations, one thing that lots of speakers do, which really bugs me, is to show a graph of some raw data, usually a spectrum of something taken with a well-established experimental technique, but without giving any explanation. If I don’t use a technique myself, even if I know in principle how it works, I don’t know if it’s considered good or unexpected or interesting or disappointing if your graph has wiggles, or is flat, or has a bump in a particular place, or a big spike, or a big dip, or if it shifts a little as you twiddle some parameter, or shifts a lot. Context, my fellow physicists! Tell us what your measurement technique does, what shows up in your graph, what ordinary data would look like, and why your particular measurement is interesting.

Books

I also ended my one-year physics-book-buying drought. I buy interesting physics books knowing that I’m not also buying the time it takes to work through them. I have one book purchase from two years ago that I’ve made a concerted effort to actually work through, but am perhaps only 20% done with it. And it’s not even a very challenging book. But I went ahead this year anyway, and took advantage of Cambridge University Press’s Wednesday afternoon buy-2-get-50%-off sale to pick up an otherwise ridiculously overpriced Elasticity with Mathematica and Geometric Algebra for Physicists, and also bought Group Theory: Applications to the Physics of Condensed Matter.

On to Portland

I’m looking forward to visiting Portland for next year’s March Meeting. I consider Portland one of my favorite cities, but in reality I’ve only spent several hours there at a time, waiting to change trains. But with a streetcar and Powell’s, who couldn’t love Portland? I had been sure that, a couple of years ago, I also saw Seattle on the list of upcoming March Meeting locations, but it seems to be gone now.

April 2, 2009 No Comments

DC intersections with Mathematica

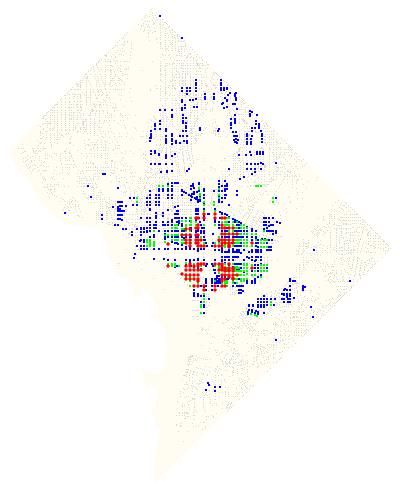

Without the quadrant designation, several intersections in Washington–“6th and C,” for example–are ambiguous. “6th and C” can refer to a place in NW, NE, SW, or SE DC. Because of this duplication of streets and intersections, the quadrant is usually–but not always–specified. I’ve been curious for some time to know exactly how many doubly-, triply-, and quadruply-redundant intersections there are in DC, and it’s another fun example combining Mathematica 7’s .shp file import with the GIS data that the DC government makes available.

How many are there? I calculate:

| Quadrants | 2 | 3 | 4 |
| --- | --- | --- | --- |
| Intersections | 418 | 71 | 28 |

The 28 intersections that appear in all 4 quadrants are:

14th & D, 9th & G, 7th & I, 7th & E, 7th & G, 7th & D, 6th & C, 6th & G, 6th & D, 6th & I, 6th & E, 4th & M, 4th & G, 4th & E, 4th & D, 4th & I, 3rd & M, 3rd & C, 3rd & K, 3rd & D, 3rd & G, 3rd & E, 3rd & I, 2nd & E, 2nd & C, 1st & M, 1st & C, 1st & N

Plotted on a map:

Color coded map of intersections in DC.

Update: Here’s a larger PDF version.

Here’s how I made the map and did the calculations:
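The counting step, at least, can be sketched independently of Mathematica. The following Python is a minimal illustration and not the code behind the numbers above: it assumes the GIS data has already been reduced to (street, street, quadrant) records, and the function name and sample records are made up.

```python
from collections import Counter, defaultdict


def count_repeated_intersections(records):
    """Group intersections by name and tally how many quadrants each appears in.

    records: iterable of (street_a, street_b, quadrant) tuples.
    Returns (quadrants_by_name, tally), where tally[k] is the number of
    intersection names found in exactly k quadrants, for k >= 2.
    """
    quadrants_by_name = defaultdict(set)
    for street_a, street_b, quadrant in records:
        # Sort the pair so that "6th & C" and "C & 6th" count as the same name.
        name = " & ".join(sorted((street_a, street_b)))
        quadrants_by_name[name].add(quadrant)
    tally = Counter(
        len(quads) for quads in quadrants_by_name.values() if len(quads) >= 2
    )
    return quadrants_by_name, tally


# Made-up sample records; the real input would come from the DC GIS data.
sample = [
    ("6th", "C", "NW"), ("6th", "C", "NE"), ("6th", "C", "SW"), ("6th", "C", "SE"),
    ("C", "6th", "NW"),                      # same intersection, streets swapped
    ("8th", "H", "NW"), ("8th", "H", "NE"),
    ("16th", "U", "NW"),                     # appears in only one quadrant
]
by_name, tally = count_repeated_intersections(sample)
print(dict(tally))
```

Using a set per intersection name means duplicate records for the same corner do not inflate the quadrant count.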

March 1, 2009 4 Comments